BGP Route Advertisement with Kube-OVN on Harvester

In multi-tenant or hybrid environments, Kubernetes workloads (VMs, pods, and services) need to be reachable from the broader network. The traditional answer is static routes configured by hand on every upstream router, an approach that breaks as soon as the cluster grows or moves. BGP solves this cleanly: the cluster advertises its own CIDRs dynamically, and every router learns them automatically.

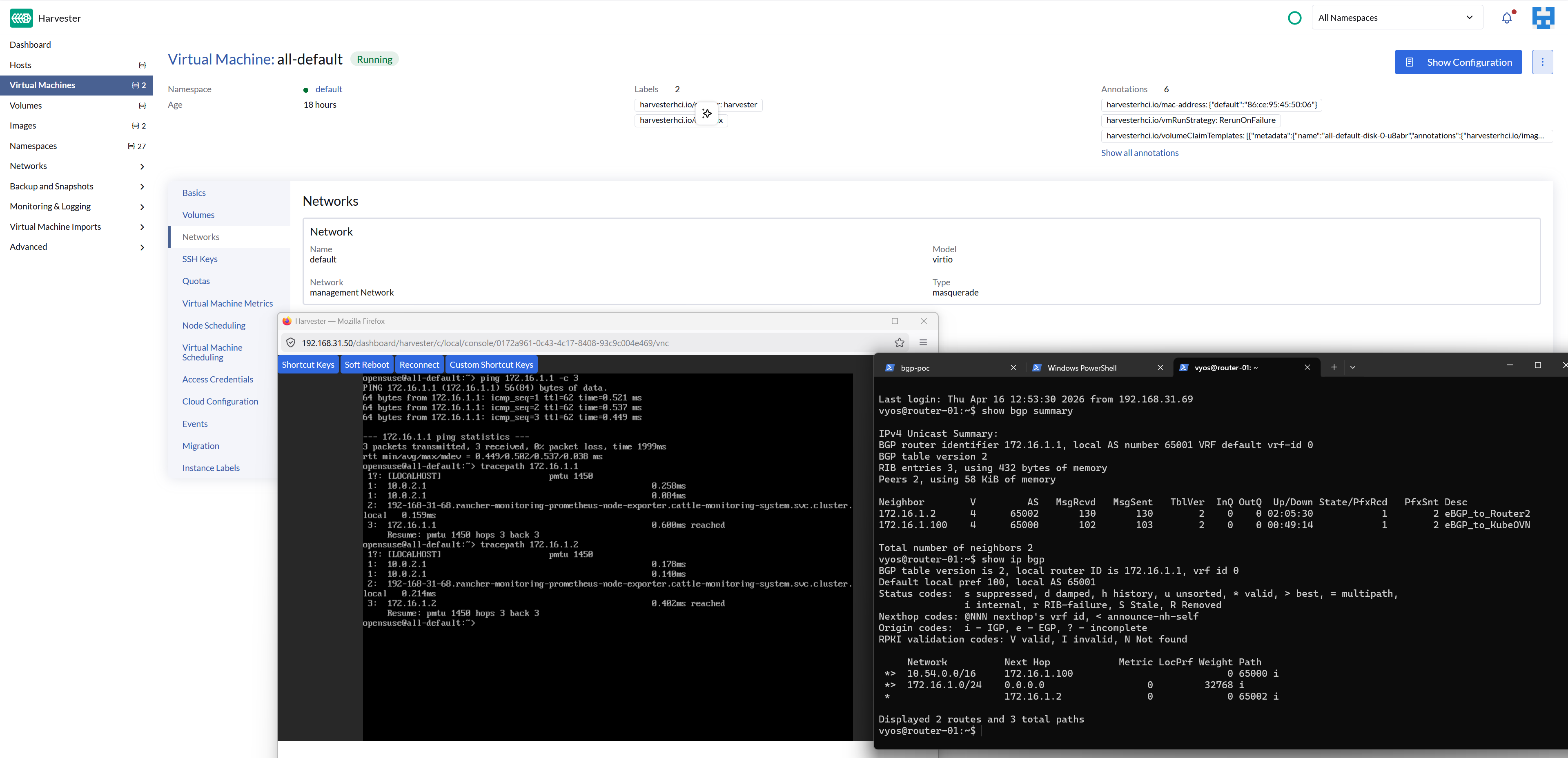

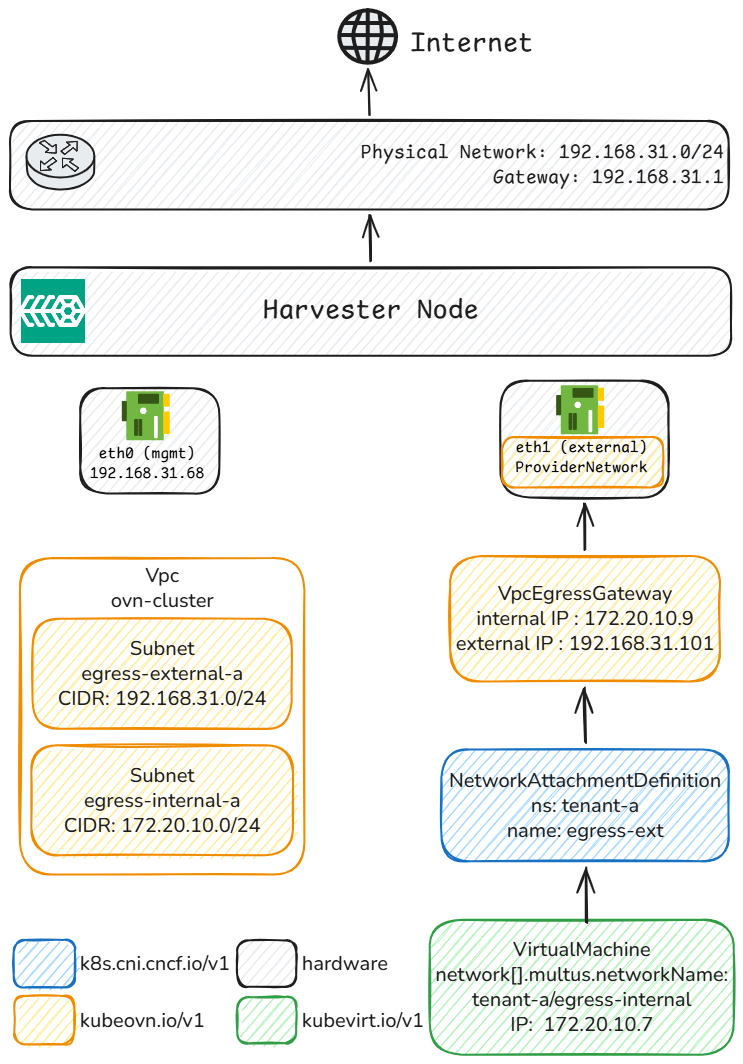

This post documents a proof-of-concept lab that validates BGP route propagation between Kube-OVN's built-in speaker, two VyOS Sagitta (1.4.x) routers, and a Harvester cluster, all running as Hyper-V VMs on a single Windows host.

The end state we are aiming for is a successful ping from router-02 to a Kube-OVN pod IP, with every hop learned via BGP: